Prepare for more frequent BIND security updates

Plan for more frequent BIND security updates, at least for the remainder of 2026.

At ISC, we sincerely value the contributions of our users and of the security researchers who analyze and probe our software for vulnerabilities. BIND, in particular, has had a long career, and the BIND project has issued 6 to 7 CVEs per year on average for the past 26 years. Since 1999, we have published 186 BIND vulnerabilities, so we have ample experience with vulnerability reporting, triage, and remediation.

Since the start of 2026, we have seen a sharp uptick in reports of potential security vulnerabilities. Other open source projects popular with security researchers have been experiencing the same surge in reports, mostly due to the easy access to LLM tools for code analysis.

Like others, we have found a high incidence of false positives in these reports, and the burden of triaging one alarming report after another has severely impacted our team. Currently, we are still accepting reports from anyone and doing our best to reproduce the ‘findings’, but we may have to stop accepting reports without a working reproduction test. But first, a little more data.

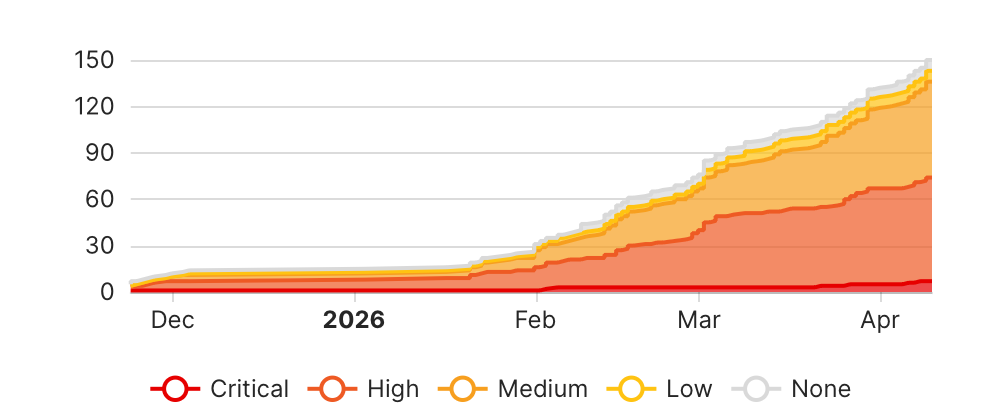

For example, here is a nice chart from the YesWeHack project for BIND, graciously funded as part of the FOSSEPS Preparatory Action, managed by the European Commission. The project was launched in December 2025, and we recently paused it, in order to give ourselves time to ‘catch up’ with the influx of reports.

The above chart shows the explosion of reports from just this one source, the YesWeHack program, with a total now of 150 vulnerability reports. Of these, we have determined that 8 are valid reports, and a further 8 are still under investigation. All the rest turned out to be false positives. This is a decent benchmark of the rate of invalid reports to valid reports, because these reporters are well motivated, and operating under fairly strict rules established by the YWH platform. The timeframe also overlaps nicely with the timeframe for LLM-assisted security research, so it is a good benchmark of the impact of LLMs.

| Total reports to date from YWH | Accepted, actionable reports | Still in triage | Dismissed reports |
|---|---|---|---|
| 150 | 8 | 8 | 134 (89%) |
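
As a quick sanity check on the table above, the dismissed count and percentage follow directly from the other three figures. A minimal Python sketch; the variable names are illustrative, and the "valid among resolved" ratio is an extra derived figure, not one stated in this post:

```python
# Figures taken from the YesWeHack table above.
total_reports = 150
accepted = 8
in_triage = 8

# Everything that is neither accepted nor still in triage was dismissed.
dismissed = total_reports - accepted - in_triage
dismissed_pct = round(100 * dismissed / total_reports)

# Valid reports as a share of those already resolved (accepted + dismissed).
resolved = accepted + dismissed
valid_pct_of_resolved = 100 * accepted / resolved

print(f"dismissed: {dismissed} ({dismissed_pct}%)")
print(f"valid among resolved reports: {valid_pct_of_resolved:.1f}%")
```

Because 8 reports are still in triage, both percentages may shift slightly once those are resolved.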

Volume-wise, this is about as many reports in three months as we might normally see in ten years, with a high rate of false positives. Is this an emergency for us? Absolutely. Are we committed to riding this wave and staying on the board? Yes. Our users should brace for a record number of security releases in 2026 and 2027, not only from us, but from all your open source providers.

One thing that the LLMs have changed is that there are now a lot more people hunting for vulnerabilities. The LLMs have made it much easier to generate a fancy, credible-looking report, and it is now easier to generate a report than it is to read and assess it. The result is a kind of asymmetrical burden on the maintainers. I am hopeful that in a few months the LLMs will have improved to the point that they may be helpful in finding solutions, rather than just finding problems, but at the moment, to lean on an analogy from the current state of warfare, we are fighting manufactured drone swarms with an arsenal of a very few hand-crafted missiles (our staff).

Here is some recent data on BIND issues marked as confidential, which is the best way to identify our current queue of potential security vulnerabilities. These are the confidential issues as of April 15, 2026. The number obviously changes daily, as new issues are submitted, and some of the existing issues are investigated and found to be false reports.

| Reported by | # of confidential issues |
|---|---|
| Security researchers | 21 |
| YesWeHack | 12 |
| Internal maintainers | 15 |
| Actual open source users | 5 |

Those attributed to security researchers represent a relatively small number of people who tend to investigate a class of possible weaknesses and report several issues at once. A lot of these historically have tended to be industry-wide DNS protocol weaknesses, which are more difficult to remediate, and often require coordination with other open source DNS software systems. Mostly, these people are motivated by the desire to publish a technical paper, and/or present at a technical conference for career advancement.

The YesWeHack reporters are a mixed group, but the successful reports have come from a handful of investigators, who are working for the bounties provided by our sponsor. We have moved all of the reports we plan to fix as security vulnerabilities, and some that we are still investigating, into our repo as confidential issues.

A minority of issues are reported by actual users of our open source, and a couple of these are really misunderstandings, but we haven’t closed the issues yet in case the users have further questions. These are the least likely to turn out to be actual vulnerabilities, and often do not include as much detail as reports from researchers, but nevertheless we take them very seriously.

| Reported by | Average issue duration (months) |
|---|---|
| Security researchers | 7 |
| YesWeHack | 0 |
| Internal maintainers | 25 |
| Actual open source users | 7 |

From the data table above, you can see that the longest open issues are those opened by the BIND maintainers themselves. These are fairly likely to be actually valid reports, because they are opened by people with very specific expertise, who are not motivated to spam the project: yet we struggle to find the time to fully investigate these, because of the volume of external reports. We are still triaging the older issues, so obviously, if we thought they were specifically dangerous, we would have addressed them sooner.

The problem with these LLM-generated security reports is that code analysis on its own is not enough to determine an actual vulnerability. A finding by code analysis is merely the starting point for a good security report.

These findings are factually incorrect about the code behavior. This is the most common LLM error type we see.

Examples:

PROXY Protocol Address Spoofing (proxystream.c, proxyudp.c) – reported as HIGH severity. This is not exploitable. The scanner claims “no built-in authentication or ACL check on who is allowed to send PROXYv2 headers.” This is wrong:

- PROXY protocol requires explicit opt-in via `listen-on ... proxy plain` (not enabled by default)
- `allow-proxy` ACL defaults to `none` (deny all), documented in ARM
- ACL enforcement uses the real peer address, not the spoofed PROXY address (`lib/ns/client.c:2231-2234`)

ISCCC GETC16/GETC32 Out-of-Bounds Read (isccc/util.h) – reported as HIGH. This is not exploitable. The `GETC16` and `GETC32` macros are dead code – never called anywhere in the codebase. The macros that ARE used (`GET8`, `GET16`, `GET32`) have proper bounds checking in every caller within `lib/isccc/cc.c`. Additionally, the rndc control channel has IP-based ACL, 32KiB message limit, and TSIG authentication before any parsing occurs.

These findings describe real code behavior, but existing mitigations prevent exploitation.

Examples (we have many, many more):

HTTP/2 Stream Exhaustion (http.c) – reported as HIGH. The 50-stream window before flood detection is real. However: DoH requires explicit opt-in (`listen-on ... http`), per-stream memory is bounded (65535 bytes max, Content-Length validated before allocation), and multiple layers of quotas exist (`tcp-clients`, `http-listener-clients`, `http-streams-per-connection`, idle timeouts). Worst-case memory per connection is ~6.5MB, bounded by connection limits.

HIP Integer Overflow (hip_55.c) – reported as MEDIUM. False positive. `hit_len` is `uint8_t` (max 255), `key_len` is `uint16_t` (max 65535). Sum is 65790, trivially fits in `size_t`. Both zero-length checks are present. DNS RDLENGTH (16-bit) further constrains values.
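
The bounds quoted in the two findings above are simple arithmetic and can be checked in a few lines. This is a minimal sketch in Python, not BIND code. The figure of 100 concurrent streams per connection is an assumption chosen to match the ~6.5MB worst case quoted above; it is not a value stated in this post.

```python
# HIP (hip_55.c): hit_len is uint8_t, key_len is uint16_t.
UINT8_MAX = 2**8 - 1     # 255
UINT16_MAX = 2**16 - 1   # 65535
worst_case_sum = UINT8_MAX + UINT16_MAX

# Even a 32-bit size_t holds values up to 2**32 - 1, so the sum cannot wrap.
SIZE_MAX_32BIT = 2**32 - 1
sum_fits_in_size_t = worst_case_sum <= SIZE_MAX_32BIT

# HTTP/2 (http.c): per-stream memory is bounded at 65535 bytes.
MAX_BYTES_PER_STREAM = 65535
ASSUMED_STREAMS_PER_CONNECTION = 100  # assumption, not taken from the post
worst_case_bytes = MAX_BYTES_PER_STREAM * ASSUMED_STREAMS_PER_CONNECTION

print(worst_case_sum, sum_fits_in_size_t)
print(f"{worst_case_bytes / 1e6:.2f} MB per connection")
```

A reporter who runs this kind of check before submitting would see that neither the overflow nor the unbounded growth can occur with the types and limits actually in the code.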

Examples:

ACL Mutex Deadlock on Recursive ACLs (acl.c). This is real but LOW severity. `insecure_prefix_lock` is a non-recursive mutex (`PTHREAD_MUTEX_ADAPTIVE_NP` on Linux). Nested ACLs in `named.conf` will deadlock during configuration loading. This is a self-DoS from the administrator’s own configuration, not remotely triggerable. Only affects the `dns_acl_isinsecure()` warning function, not access control decisions.

`fromwire_keydata` No Minimum Length (keydata_65533.c). Real but LOW severity. Accepts zero-length KEYDATA records. However, KEYDATA (type 65533) is a private, non-standard type used only internally for RFC 5011 trust anchor management, not received from external sources in normal operation. `tostruct_keydata` has defensive checks.
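
The deadlock in the ACL finding above comes from a thread re-acquiring a non-recursive mutex it already holds. The following is a minimal Python analogy, not BIND code: `threading.Lock` stands in for the non-recursive mutex and `threading.RLock` for a recursive one, and a timeout is used so the sketch reports the would-be deadlock instead of hanging. The function name `nested_acquire` is hypothetical.

```python
import threading

def nested_acquire(lock, timeout=0.1):
    """Take `lock` twice from the same thread, as a nested check would.

    Returns True if the inner acquire succeeds, False if it times out
    (which, without a timeout, would be a deadlock).
    """
    if not lock.acquire(timeout=timeout):
        return False
    try:
        got_inner = lock.acquire(timeout=timeout)
        if got_inner:
            lock.release()  # release the inner hold
        return got_inner
    finally:
        lock.release()      # release the outer hold

non_recursive_ok = nested_acquire(threading.Lock())
recursive_ok = nested_acquire(threading.RLock())

print("non-recursive lock, nested acquire succeeded:", non_recursive_ok)
print("recursive lock, nested acquire succeeded:", recursive_ok)
```

The point of the analogy is that the failure requires the same thread to nest the call, which here only happens while loading the administrator's own configuration.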

These are the most upsetting reports, where the proposed ‘vulnerability’ is just how the software is intended to work. We can only assume these reporters didn’t even read their reports critically before submitting them.

Example:

RRL TCP Credit Bypass (rrl.c) – reported as HIGH. This is by design. TCP responses are intentionally exempt from rate limiting because TCP requires a completed handshake proving the client owns its source IP. An attacker spoofing UDP source IPs for amplification cannot complete a TCP handshake from those IPs. The TCP credit only disables QPS scaling – base per-client rate limits still apply fully.

Quite a few reports identify “vulnerabilities” that are found in test harnesses, fuzz drivers and utility scripts. These include hardcoded keys in fuzz harnesses, stack allocations in test clients, deprecated APIs in test vectors, etc. None affect production deployments.

MOST OF THESE FALSE POSITIVES CAN BE AVOIDED BY SIMPLY REPRODUCING THE SUPPOSED PROBLEM IN AN ACTUAL SOFTWARE TEST.

We have published several documents that we hope help researchers assessing our software security:

We would like to offer some tips on making your security reports more useful, for those reporters who are motivated to actually help open source security.

First the basics: while we accept reports via email, we also get a tremendous amount of spam, and our spam filters sometimes reject security reports sent via email. If you have a serious report, it is worth taking the time to make an account on our open GitLab repository and submit an issue there. This has the further advantage that, having submitted the issue yourself, you will be copied on all the updates and be able to work with us as we triage and attempt to reproduce your report.

In our repository, we have a form designed specifically for reporting security issues. I know everyone thinks the forms are for ‘other people’, we all want to skip the annoying form, but in this case, skipping the form just forces us to ask you the same questions again, after you open your issue, because we actually need the information on the form. When you open a new issue, there is a pulldown to select a form for a bug submission, feature request, or security issue. One further benefit of using the security issue form is that the issue is automatically marked as CONFIDENTIAL. It is important to mark issues as confidential that may turn out to be vulnerabilities, yet even self-described researchers sometimes submit these in the open. (We got a security vulnerability reported in the open in our repo earlier this week, which we closed as soon as we saw it.)

Here is the Security Issue form:

If you found the problem using generative AI tools and you have verified it yourself to be true: write the report yourself and explain the problem as you have learned it. This makes sure the AI-generated inaccuracies and invented issues are filtered out early before they waste more people’s time. Even if you write the report yourself, you must make sure to reveal the fact that the generative AI was used in your report.

As we take security reports seriously, we investigate each report with priority. This work is both time- and energy-consuming and pulls us away from doing other meaningful work. Fake and otherwise made-up security problems effectively prevent us from doing real project work and make us waste time and resources.

We ban users immediately who submit fake reports to the project.

Concisely summarize the bug encountered, preferably in one paragraph or less.

Make sure you are testing with the latest supported version of BIND. See https://kb.isc.org/docs/supported-platforms for the current list. The latest source is available from https://www.isc.org/download/#BIND

Paste the output of named -V here.

Is a specific setup needed?

Please check the BIND Security Assumptions chapter in the ARM: https://bind9.readthedocs.io/en/latest/chapter7.html#security-assumptions

E.g. DNSSEC validation must be disabled, etc. E.g. Resolver must be configured to forward to attacker’s server via DNS-over-TLS, etc. E.g. Authoritative server must be configured to transfer specific primary zone. E.g. Attacker must be in possession of a key authorized to modify at least one zone. E.g. Attacker can affect system clock on the server running BIND.

What resources does an attacker need to have under their control to mount this attack?

E.g. If attacking an authoritative server, does the attacker have to have a prior relationship with it? “The authoritative server under attack needs to transfer a malicious zone from attacker’s authoritative server via TLS.”

E.g. If attacking a resolver, does the attacker need the ability to send arbitrary queries to the resolver under attack? Do they need to also control an authoritative server at the same time?

Who or what is the victim of the attack and what is the impact?

Is a third party receiving many packets generated by a reflection attack?

If the affected party is the BIND server itself, please quantify the impact on legitimate clients: E.g. After launching the attack, the answers-per-second metric for legitimate traffic drops to 1/1000 within the first minute of the attack.

This is extremely important! Be precise and use itemized lists, please.

Even if a default configuration is affected, please include the full configuration files you were testing with.

Example:

Use the attached configuration file

Start the BIND server with command: named -g -c named.conf ...

Simulate legitimate clients using the command dnsperf -S1 -d legit-queries ...

Simulate attack traffic using the command dnsperf -S1 -d attack-queries ...

Examples: Legitimate QPS drops 1000x. Memory consumption increases out of bounds and the server crashes. The server crashes immediately.

If the attack causes resource exhaustion, what do you think the correct behavior should be? Should BIND refuse to process more requests?

What heuristic do you propose to distinguish legitimate and attack traffic?

Please provide log files from your testing. Include full named logs and also the output from any testing tools (e.g. dnsperf, DNS Shotgun, kxdpgun, etc.)

If multiple log files are needed, make sure all the files have matching timestamps so we can correlate log events across log files.

In the case of resource exhaustion attacks, please also include system monitoring data. You can use https://gitlab.isc.org/isc-projects/resource-monitor/ to gather system-wide statistics.
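
When timestamps match across files, as requested above, correlating events is mechanical. This is a minimal sketch in Python, assuming each log line starts with an ISO 8601 timestamp followed by a space. That format is an assumption for illustration, so adjust the parsing to whatever your tools actually emit. The file names and messages are hypothetical placeholders, not real output from any tool.

```python
import heapq
from datetime import datetime

def parse_line(source, line):
    """Split 'TIMESTAMP message' into a sortable (time, source, message) tuple."""
    stamp, _, message = line.partition(" ")
    return datetime.fromisoformat(stamp), source, message

def merge_logs(logs):
    """Interleave several already-chronological logs into one timeline."""
    streams = [
        [parse_line(source, line) for line in lines]
        for source, lines in logs.items()
    ]
    return list(heapq.merge(*streams))

# Hypothetical excerpts, for illustration only.
logs = {
    "server.log": [
        "2026-04-15T10:00:00 event A",
        "2026-04-15T10:00:02 event C",
    ],
    "client.log": [
        "2026-04-15T10:00:01 event B",
    ],
}

timeline = merge_logs(logs)
for when, source, message in timeline:
    print(when.isoformat(), source, message)
```

If the clocks on the machines producing the logs are not synchronized, no amount of merging will recover the true order, which is why matching timestamps matter.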

Issues affecting multiple implementations require very careful coordination. We have to make sure the information does not leak to the public until vendors are ready to release fixed versions. If it is a multi-vendor issue, we need to know about the situation as soon as possible to start the (confidential!) coordination process within DNS-OARC and other suitable fora.

Please list implementations you have tested.

Have you informed other affected vendors? Or maybe submitted a paper for review?

E.g. we plan to go public during conference XYZ on 20XX-XX-XX

Please specify whether and how you would like to be publicly credited with discovering the issue. We normally use the format: First_name Last_name, Company_or_Team.

One advantage we have with BIND is that it is an application, not a module or library, and therefore it is relatively easy to test the proposed vulnerability on a real system to determine whether it is actually exploitable. Surprisingly few of these LLM-enabled bug reporters seem to bother to do this, unfortunately. Still fewer include complete instructions for reproducing the issue in a real system. Please don’t insist on using some weird Docker you cooked up or a proprietary configuration you cannot share. Please don’t share some fake ‘test script’ that you haven’t actually tried to run yourself - the LLMs will happily generate a test that proves nothing.

We have gotten reports that sincerely state that there is a serious problem, when anyone who understands how DNS works would know that the observed behavior is exactly as designed. For example, one recent report boiled down to “the authoritative server for a domain can update nameservers for that domain over TCP”. Sure, the LLM claimed that if someone could compromise the authoritative server, they could update the authoritative information, but any human reader would know that this is simply how the DNS works.

Another report we just got claims cache poisoning. Now, this would be a truly alarming vulnerability - much worse than our usual DDoS. But in order to accomplish this attack, the attacker needs to be able to spoof responses between the resolver and authoritative server, which basically means the entire system is compromised. This is not a software bug.

Please don’t submit reports like this. We are wading through dozens of these reports, on an urgent basis, and some of them are real. When we read one that the submitter clearly hasn’t even bothered to read themselves, well, it is very frustrating. We don’t want to have to ban reporters but we may soon start to ban reports from people who repeatedly waste our time with complete nonsense.

I don’t think we have ever had a security report submitted that included both a system test reproducer and a serious, usable working patch, but we dream about it. Maybe someone reading this will make history and do that??

Here is an example of a security issue, that did turn out to be a CVE, that we have patched and published. This is a good example of a terse, but complete and effective report that used the form. (If you click on the link above you will see the actual issue in our repository, which includes our standard checklist for patching and publishing a vulnerability.)

Authoritative servers with a KEY RR in a zone or validating resolvers are vulnerable to trivial CPU exhaustion attacks using SIG(0) protocol.

All versions with SIG(0) support. Tested configuration:

BIND 9.19.19-dev (Development Release) <id:de2009e>

compiled by GCC 13.2.1 20230801

compiled with OpenSSL version: OpenSSL 3.1.4 24 Oct 2023

linked to OpenSSL version: OpenSSL 3.1.4 24 Oct 2023

DNSSEC algorithms: RSASHA1 NSEC3RSASHA1 RSASHA256 RSASHA512 ECDSAP256SHA256 ECDSAP384SHA384 ED25519 ED448

DS algorithms: SHA-1 SHA-256 SHA-384

HMAC algorithms: HMAC-MD5 HMAC-SHA1 HMAC-SHA224 HMAC-SHA256 HMAC-SHA384 HMAC-SHA512

TKEY mode 2 support (Diffie-Hellman): no

TKEY mode 3 support (GSS-API): yes

Attacker simply needs to send a signed message which refers the existing KEY RR in one of the zones OR in cache. The signature can be invalid (any syntactically valid message is sufficient).

The impact gets higher with number of KEY RRs on the same name - it seems we are trying all KEY RRs until we find a match or exhaust all options.

Values were measured on build from the bind-9.18 branch, listed as thousands QPS, named running on a single CPU core to make measurement easier.

| State | QPS [k] |

|---|---|

| legitimate cache hits - before the attack | 70 |

| attack | 1 |

| legitimate traffic when the attack is ongoing | 10 |
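
The impact on legitimate clients can be read straight off the table above. A minimal sketch of the calculation in Python; the variable names and the derived "leverage" figure are illustrative and are not part of the original report:

```python
# Values from the table above, in thousands of queries per second.
baseline_kqps = 70        # legitimate cache hits before the attack
attack_kqps = 1           # attack traffic
under_attack_kqps = 10    # legitimate traffic while the attack is ongoing

# How many times slower legitimate traffic is served during the attack.
slowdown = baseline_kqps / under_attack_kqps

# Share of legitimate throughput lost.
lost_pct = 100 * (baseline_kqps - under_attack_kqps) / baseline_kqps

# Legitimate queries lost per attack query sent.
leverage = (baseline_kqps - under_attack_kqps) / attack_kqps

print(f"slowdown: {slowdown:.0f}x, lost: {lost_pct:.0f}%, leverage: {leverage:.0f}:1")
```

Numbers like these are what make a resource-exhaustion report actionable, because they show how little attack traffic is needed to cause the damage.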

Beware: Test over UDP. TCP also suffers from #4481 which throws results off.

Configure BIND to be authoritative for a zone containing KEY RR:

- `named -g -c named.conf -n1` (single thread to simplify measurement)
- `yes '. A' | dnsperf -S1 -O suppress=timeout -c 256`
- `python udploop.py 127.0.0.1 53 sig0-bad-query.tcpdns --report-interval 1`

Please note the binary containing queries does NOT have a valid signature. It’s a valid message which pretends to be signed with the KEY RR from the zone file, but it’s not a valid signature.

Probably limit on resources we are willing to spend on SIG(0), or expensive crypto in general.

Possibly also smaller TCP buffer size (or smaller buffers elsewhere, I don’t exactly know where the messages are being buffered).

This particular variant of attack logs one message per query:

request has invalid signature: RRSIG failed to verify (BADSIG)

We do recognize that the new, powerful AI-enabled tools will find many software vulnerabilities that have remained hidden for years. We want to find these, and fix them, as soon as possible, because we know that adversaries can also use these tools. Please, if you are doing security research on open source software, consider whether you are willing to take the time to make reports that actually help the open source project you are submitting them to. If it is not worth your time to verify the hypothesis you have generated with a real test, please do not burden others with that task.
